Confidence in classification

Expected confidence score (degree of certainty for intent assignment)

For each classified phrase, the model returns a confidence score: a value that reflects how certain it is about the assigned intent. The score is always between 0 and 1. When it is high (around 0.8-0.9 or above), the model can be said to be fairly confident that the predicted class is correct.

However, whenever we are sure that we are right, are we actually right? :)

Remember that we should not fully trust the model. For this reason, an experimentally derived threshold is established: the minimum degree of certainty at which we treat a phrase as correctly assigned. In our engine, this threshold is currently set to 0.4.

0.4 also seems like a good threshold because two intents for the same phrase rarely both get a score above this value.
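The thresholding idea can be illustrated with a minimal sketch. This is not the engine's actual implementation: the function name, the shape of the `scores` dictionary, and the choice to treat a score exactly equal to the threshold as accepted are all assumptions made for illustration. Only the value 0.4 comes from the text above.

```python
from typing import Optional

# 0.4 is the engine threshold quoted in this article; everything else
# in this sketch (names, data shape, inclusive comparison) is hypothetical.
DEFAULT_THRESHOLD = 0.4


def pick_intent(scores: dict[str, float],
                threshold: float = DEFAULT_THRESHOLD) -> Optional[str]:
    """Return the top-scoring intent, or None if it does not reach the threshold."""
    if not scores:
        return None
    # Take the intent with the highest confidence score.
    best_intent = max(scores, key=scores.get)
    # Accept it only when its score reaches the threshold.
    return best_intent if scores[best_intent] >= threshold else None
```

For example, `pick_intent({"book_flight": 0.72, "cancel": 0.15})` yields `"book_flight"`, while a phrase whose best score is 0.35 yields no intent at all.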

Checking confidence score

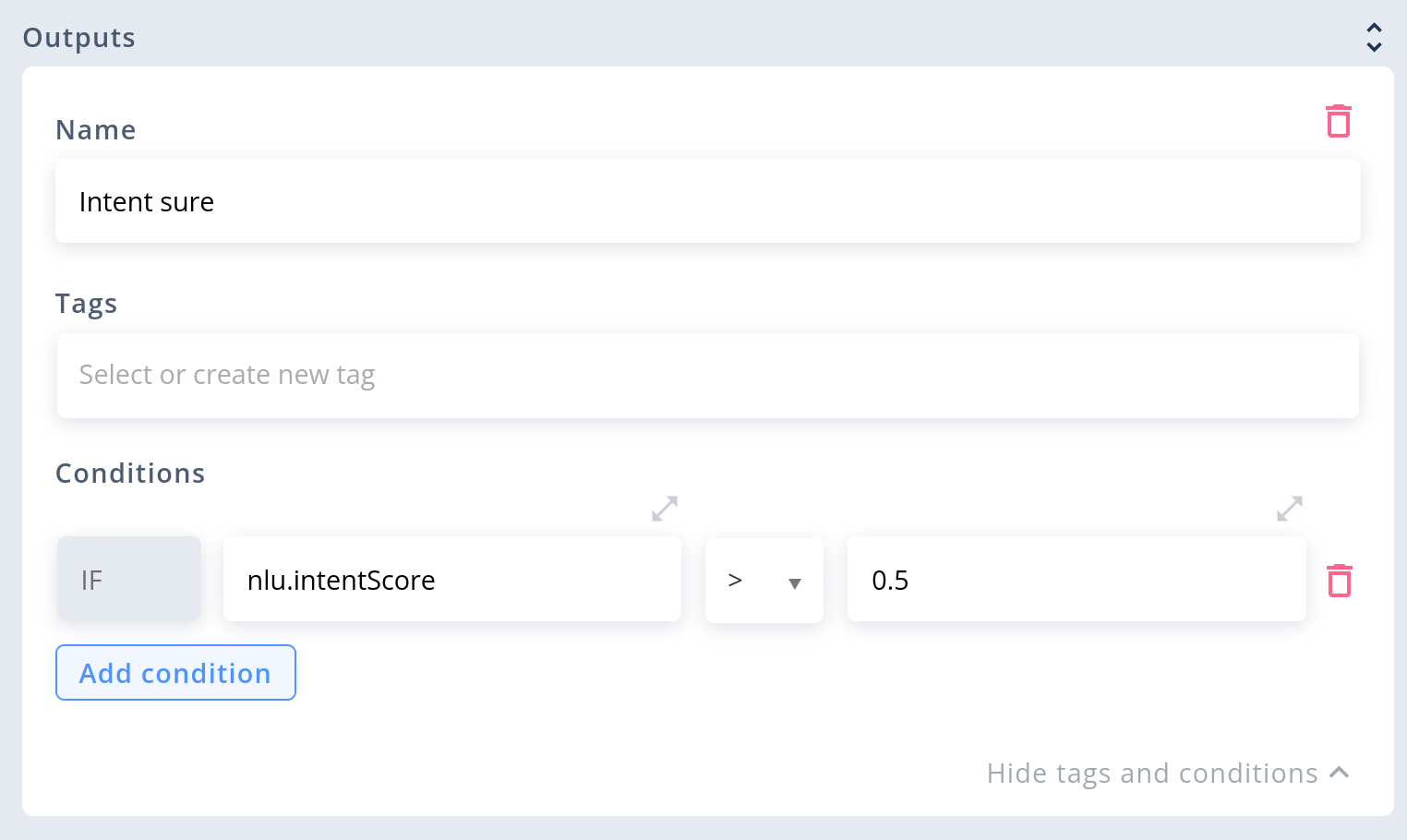

You can use the system variable nlu.intentScore to check, in a given block, whether the NLU result is relevant for you. For example, at a certain point in the flow you may need to be more confident that the intent recognized in the user's utterance is the correct one.

Example of checking intent score in Say block
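The same kind of check can be sketched outside the editor. The snippet below is only an illustration in Python, not the platform's expression syntax: `intent_score` stands in for the value you would read from nlu.intentScore, and the stricter value 0.8 and the step names `"proceed"` and `"ask_to_confirm"` are hypothetical.

```python
def next_step(intent_score: float, required_score: float = 0.8) -> str:
    """Decide whether to act on the intent or ask the user to confirm it."""
    # Confidence scores are always between 0 and 1.
    if not 0.0 <= intent_score <= 1.0:
        raise ValueError("intent score must be between 0 and 1")
    # Act on the intent only when the score meets the stricter,
    # block-specific requirement; otherwise ask for confirmation.
    return "proceed" if intent_score >= required_score else "ask_to_confirm"
```

A block that needs more certainty than the global threshold gives can use this pattern to route low-scoring results to a confirmation question instead of acting on them directly.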

Setting confidence score

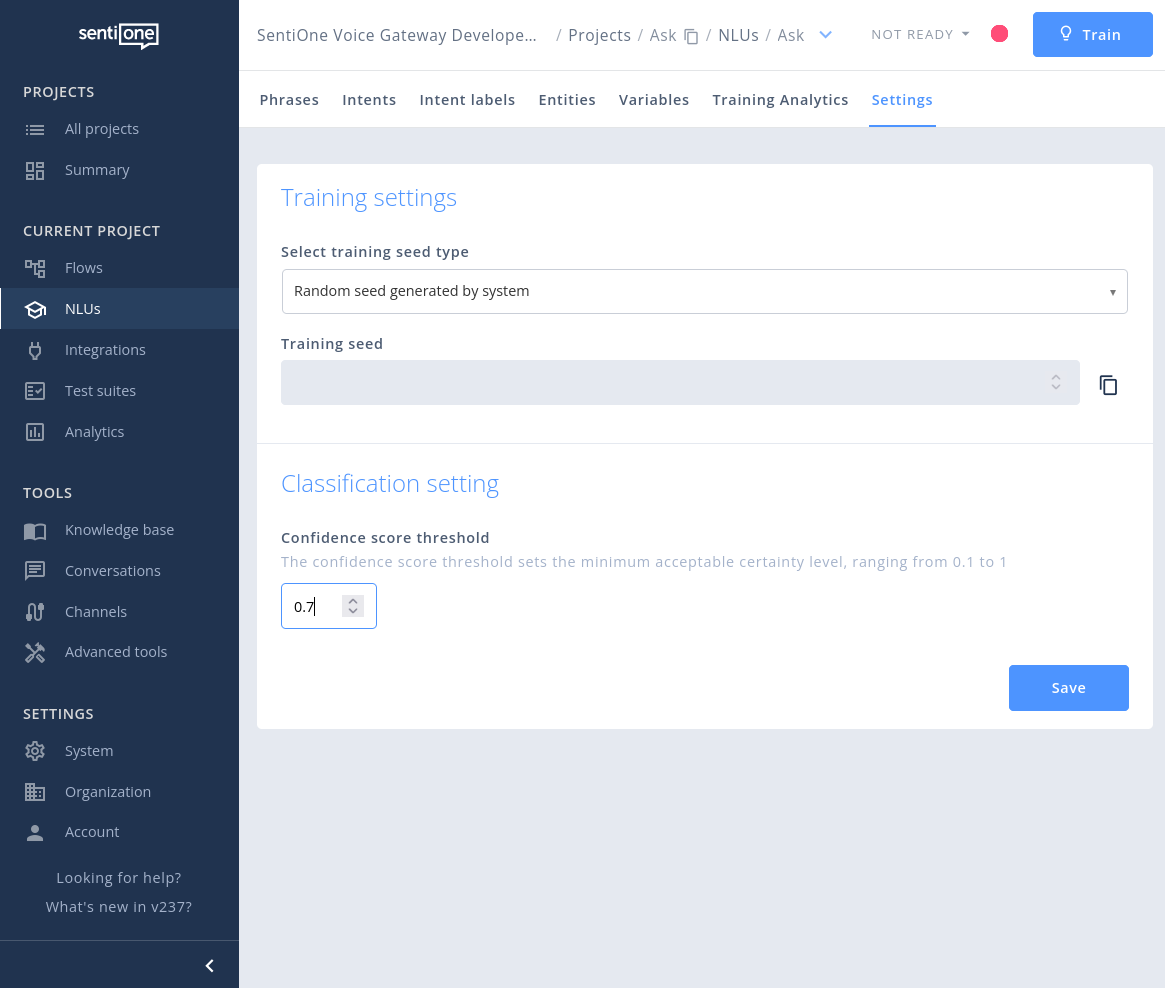

You can define the score threshold for the whole NLU in the Settings tab...

NLU settings form

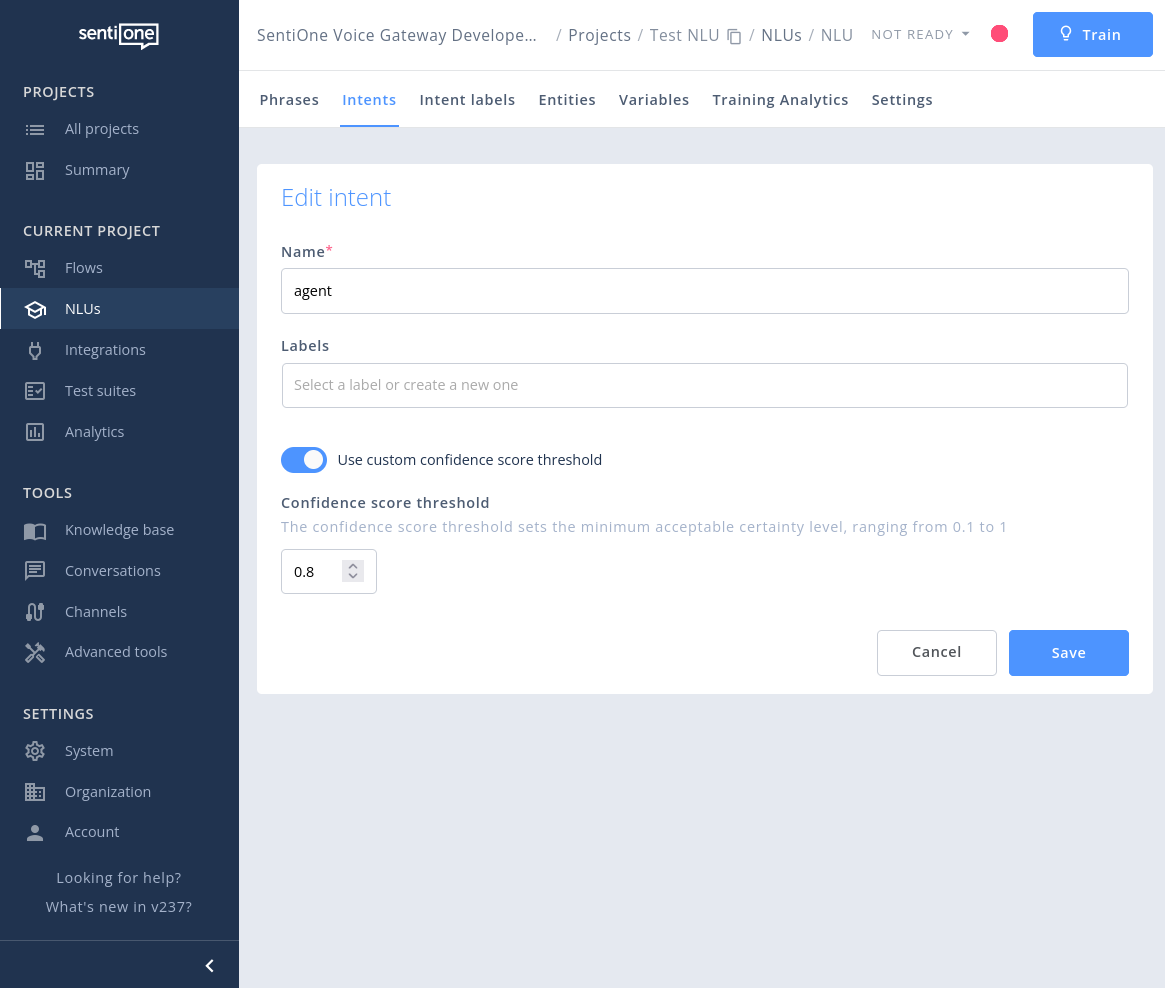

...or for a single intent:

Intent edit form

Please note that confidence score thresholds are applied instantly and do not require NLU training.
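One way to picture how the two settings could interact is sketched below. It assumes that a threshold set on a single intent takes precedence over the NLU-wide one, which this article does not state explicitly; the function names and the example intents are hypothetical. Because the threshold is only compared against a score the model has already produced, changing it is a configuration change, not a change to the model.

```python
def effective_threshold(intent: str,
                        nlu_threshold: float,
                        intent_thresholds: dict[str, float]) -> float:
    """Use the intent-specific threshold if one is set, else the NLU-wide one."""
    # Assumption: a per-intent setting overrides the NLU-wide setting.
    return intent_thresholds.get(intent, nlu_threshold)


def is_accepted(intent: str,
                score: float,
                nlu_threshold: float,
                intent_thresholds: dict[str, float]) -> bool:
    """Check a score against whichever threshold applies to this intent."""
    return score >= effective_threshold(intent, nlu_threshold, intent_thresholds)


# Hypothetical configuration: a stricter threshold for one sensitive intent.
overrides = {"cancel_subscription": 0.7}
print(is_accepted("cancel_subscription", 0.55, 0.4, overrides))
print(is_accepted("check_balance", 0.55, 0.4, overrides))
```

With this configuration the same score of 0.55 is rejected for the stricter intent and accepted for the other one.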
